Researchers at Columbia have created a first-of-its-kind robot hand that combines an advanced sense of touch with motor-learning algorithms to manipulate objects without relying on vision. Dexterity in robot hands has long been elusive. Robots can already pick and place objects, but assembly, insertion, reorientation, packaging, and many other tasks still rely on human hands. Recent advances in sensing technology, and in the machine-learning techniques that process the sensed data, are rapidly changing the field of robotic manipulation.

The team focused on a difficult manipulation task: rotating an unevenly shaped object held in the hand through an arbitrarily large angle while keeping it in a stable, secure grasp. The task requires constantly repositioning some of the fingers while the others hold the object steady. The hand performed it without visual feedback, relying solely on touch sensing. Because it needs no external cameras, it is also unaffected by lighting conditions. Potential uses include logistics and material handling, where such hands could help ease supply chain challenges.

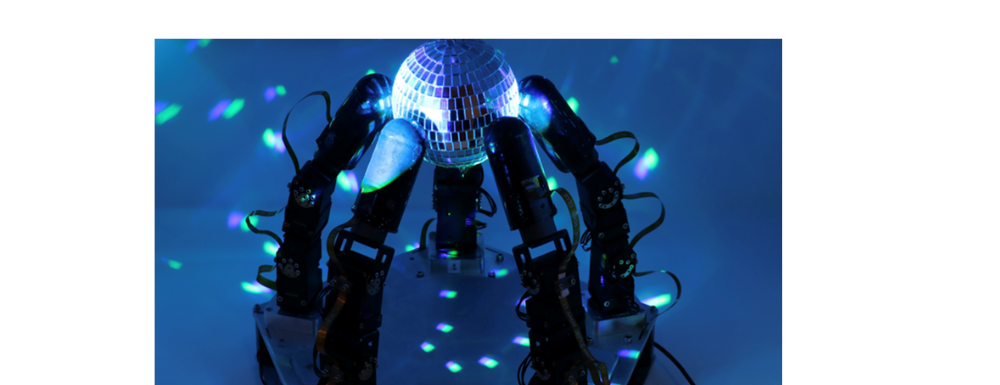

The robot hand has five fingers and 15 independently actuated joints, and each finger is equipped with touch sensors. To train it, the researchers used deep reinforcement learning, a motor-learning method, augmented with new algorithms they developed.

Because training ran in simulation, the robot accumulated approximately one year of practice in only hours of real time. The researchers then transferred this manipulation skill to the physical robot hand, which reached the team's initial dexterity goals.